Query monitoring metrics include the ratio of maximum blocks read for any slice to average blocks read for all slices (I/O skew) and the number of rows in a nested loop join. For metrics based on disk usage, the acceptable threshold varies based on the cluster node type. Audit log files are delivered using service-principal credentials. While most relational databases use row-level locks, Amazon Redshift uses table-level locks. You're limited to retrieving only 100 MB of data with the Data API, but you can use data lake export (the UNLOAD command) with the Data API. If you want to run multiple statements with UNLOAD, or combine UNLOAD with other SQL statements, use batch-execute-statement.
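A minimal sketch of running data lake export through the Data API from Python, assuming the boto3 `redshift-data` client and its `execute_statement` and `batch_execute_statement` calls. The cluster identifier, database, secret ARN, S3 prefix, and role ARN are hypothetical placeholders. The client is passed in as a parameter so the helpers can be tried out without AWS access.

```python
"""Sketch: run UNLOAD (data lake export) through the Redshift Data API.

Assumes a boto3 "redshift-data" client; all identifiers are hypothetical.
"""
from typing import Any, List


def unload_to_parquet(client: Any, cluster_id: str, database: str, secret_arn: str,
                      query: str, s3_prefix: str, iam_role: str) -> str:
    """Submit one UNLOAD ... FORMAT AS PARQUET statement and return its ID."""
    # Single quotes inside UNLOAD ('...') must be doubled.
    escaped = query.replace("'", "''")
    sql = (
        f"UNLOAD ('{escaped}') TO '{s3_prefix}' "
        f"IAM_ROLE '{iam_role.strip()}' FORMAT AS PARQUET"
    )
    response = client.execute_statement(
        ClusterIdentifier=cluster_id,
        Database=database,
        SecretArn=secret_arn,  # credentials stored in AWS Secrets Manager
        Sql=sql,
    )
    return response["Id"]


def run_batch(client: Any, cluster_id: str, database: str, secret_arn: str,
              statements: List[str]) -> str:
    """Submit several statements (for example, UNLOAD plus other SQL) as one batch."""
    response = client.batch_execute_statement(
        ClusterIdentifier=cluster_id,
        Database=database,
        SecretArn=secret_arn,
        Sqls=statements,
    )
    return response["Id"]
```

With a real client, `unload_to_parquet(boto3.client("redshift-data"), ...)` returns a statement ID that you can poll; the exported rows land in S3 instead of passing through the 100 MB Data API result limit.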

On the AWS Console, choose CloudWatch under services, and then select Log groups from the right panel. Choose the logging option that's appropriate for your use case. You can fetch results using the query ID that you receive as an output of execute-statement. We also explain how to use AWS Secrets Manager to store and retrieve credentials for the Data API. Don't retrieve a large amount of data through your client; instead, use the UNLOAD command to export the query results to Amazon S3.
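Fetching results by query ID can be sketched as two steps: poll `describe_statement` until the statement finishes, then page through `get_statement_result` by following `NextToken`. This assumes the boto3 `redshift-data` client; the poll interval and timeout defaults are arbitrary choices for illustration.

```python
"""Sketch: wait for a Data API statement and fetch every result page.

Assumes a boto3 "redshift-data" client passed in by the caller.
"""
import time
from typing import Any, Dict, List


def wait_for_statement(client: Any, statement_id: str, poll_seconds: float = 1.0,
                       timeout_seconds: float = 300.0) -> Dict[str, Any]:
    """Poll describe_statement until the statement reaches a terminal state."""
    deadline = time.monotonic() + timeout_seconds
    while True:
        desc = client.describe_statement(Id=statement_id)
        status = desc["Status"]
        if status == "FINISHED":
            return desc
        if status in ("FAILED", "ABORTED"):
            raise RuntimeError(f"statement {statement_id} {status}: {desc.get('Error', '')}")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"statement {statement_id} still {status}")
        time.sleep(poll_seconds)


def fetch_all_records(client: Any, statement_id: str) -> List[List[Dict[str, Any]]]:
    """Collect every page of get_statement_result by following NextToken."""
    records: List[List[Dict[str, Any]]] = []
    kwargs: Dict[str, Any] = {"Id": statement_id}
    while True:
        page = client.get_statement_result(**kwargs)
        records.extend(page["Records"])  # each record is a list of field dicts
        token = page.get("NextToken")
        if not token:
            return records
        kwargs["NextToken"] = token
```

Raising on `FAILED` and `ABORTED` keeps callers from silently reading an empty result set when the SQL did not complete.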

Running queries against STL tables requires database computing resources, just as when you run other queries. If you want to retain the log data, you need to periodically copy it to other tables or unload it to Amazon S3. You can configure audit logging with Amazon S3 as the log destination from the console or through the AWS CLI. The user or IAM role that turns on logging must have Amazon Redshift The connection log records information such as the IP address of the user's computer, the type of authentication used by the user, and the timestamp of the request. We are continuously investing to make analytics easy with Redshift by simplifying SQL constructs and adding new operators.
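Turning on audit logging to Amazon S3 programmatically can be sketched with the boto3 `redshift` client's `enable_logging` call, assuming its `LogDestinationType` and `LogExports` parameters. The cluster name, bucket, and prefix are hypothetical, and the bucket is assumed to already have a policy that allows Amazon Redshift to write to it.

```python
"""Sketch: turn on Amazon Redshift audit logging to Amazon S3 with boto3.

Assumes a boto3 "redshift" client; cluster and bucket names are hypothetical.
"""
from typing import Any, Dict, Iterable

# Audit log types accepted in LogExports.
VALID_LOG_TYPES = {"connectionlog", "userlog", "useractivitylog"}


def enable_s3_audit_logging(client: Any, cluster_id: str, bucket: str, prefix: str,
                            log_types: Iterable[str] = ("connectionlog", "userlog",
                                                        "useractivitylog")
                            ) -> Dict[str, Any]:
    """Call EnableLogging with Amazon S3 as the log destination."""
    exports = list(log_types)
    unknown = set(exports) - VALID_LOG_TYPES
    if unknown:
        raise ValueError(f"unsupported log types: {sorted(unknown)}")
    return client.enable_logging(
        ClusterIdentifier=cluster_id,
        BucketName=bucket,
        S3KeyPrefix=prefix,
        LogDestinationType="s3",
        LogExports=exports,
    )
```

The user activity log is only populated when the `enable_user_activity_logging` database parameter is set to true in the cluster's parameter group.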

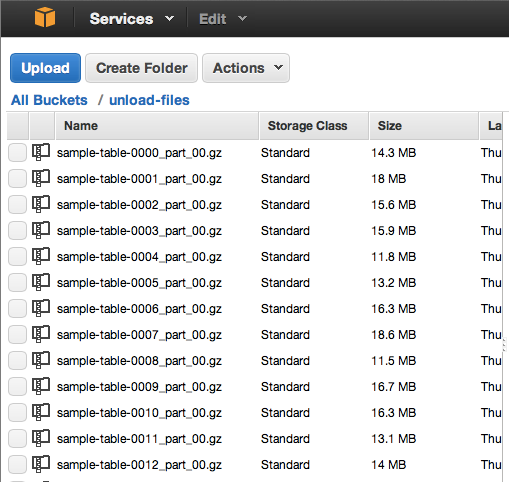

If the queue contains other rules, those rules remain in effect. If all of the predicates for any rule are met, that rule's action is triggered. There are no additional charges for STL table storage. For more information, see Configuring auditing using the console. We will discuss later how you can check the status of a SQL statement that you executed with execute-statement.

Note: Use the UNLOAD command with a SELECT statement when unloading data to your S3 bucket. You can unload text data in either a delimited or fixed-width format (regardless of the data format used when it was loaded), and you can also specify whether the output files should be compressed with gzip.

When unloading data from your Amazon Redshift cluster to your Amazon S3 bucket, you might encounter the following errors:

- DB user is not authorized to assume the AWS Identity and Access Management (IAM) Role error: error: User arn:aws:redshift:us-west-2::dbuser:/ is not authorized to assume IAM Role arn:aws:iam:::role/
- 403 Access Denied error: (500310) Invalid operation: S3ServiceException:Access Denied,Status 403,Error AccessDenied,
- Specified unload destination on S3 is not empty error: ERROR: Specified unload destination on S3 is not empty. Consider using a different bucket / prefix, manually removing the target files in S3, or using the ALLOWOVERWRITE option.

Resolution

DB user is not authorized to assume the AWS IAM Role error

If the database user isn't authorized to assume the IAM role, check the following:

- Verify that the IAM role is associated with your Amazon Redshift cluster.
- Verify that there are no trailing spaces in the IAM role used in the UNLOAD command.
- Verify that the IAM role assigned to the Amazon Redshift cluster is using the correct trust relationship.

403 Access Denied error

If you receive a 403 Access Denied error from your S3 bucket, confirm that the proper permissions are granted for your S3 API operations. If you're using server-side encryption with S3-managed encryption keys, your S3 bucket encrypts each of its objects with a unique key. As an additional safeguard, the key itself is also encrypted with a root key that is regularly rotated.

Specified unload destination on S3 is not empty error

This error might happen when you run the same UNLOAD command and unload files into a folder where data files are already present. For example, you get this error if you run the following command twice:

unload ('select * from test_unload') to 's3://testbucket/unload/test_unload_file1' iam_role 'arn:aws:iam::0123456789:role/redshift_role';

To avoid it, use a different bucket or prefix, manually remove the target files in S3, or add the ALLOWOVERWRITE option.
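When UNLOAD statements are generated in code, two of these errors can be guarded against up front: stripping whitespace from the IAM role ARN, and adding ALLOWOVERWRITE when the target prefix may already contain files. The sketch below also shows the standard trust relationship that lets the Amazon Redshift service assume a role. The helper names are hypothetical, and ALLOWOVERWRITE should only be enabled when replacing existing files under the prefix is really intended.

```python
"""Sketch: build UNLOAD statements that sidestep two common errors.

Helper names are hypothetical; values passed to them are placeholders.
"""
from typing import Any, Dict


def build_unload(query: str, s3_prefix: str, iam_role: str,
                 allow_overwrite: bool = False, gzip: bool = False) -> str:
    """Return an UNLOAD statement for the given SELECT query."""
    escaped = query.replace("'", "''")  # quotes inside UNLOAD ('...') are doubled
    parts = [
        f"UNLOAD ('{escaped}')",
        f"TO '{s3_prefix}'",
        f"IAM_ROLE '{iam_role.strip()}'",  # trailing spaces break role lookup
    ]
    if gzip:
        parts.append("GZIP")
    if allow_overwrite:
        parts.append("ALLOWOVERWRITE")  # replace files already under the prefix
    return " ".join(parts) + ";"


def redshift_trust_policy() -> Dict[str, Any]:
    """Trust relationship that lets the Amazon Redshift service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Service": "redshift.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }],
    }
```

For example, `build_unload("select * from test_unload", "s3://testbucket/unload/test_unload_file1", "arn:aws:iam::0123456789:role/redshift_role ", allow_overwrite=True)` drops the stray trailing space and can be rerun against the same prefix.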
